The Token Test: How the Best Companies Will Hire Engineers from Now On

"Tokenmaxxing" is a Vanity Metric. While Big Tech burns billions on AI tokens, the best companies are hiring for efficiency. Discover why the "Token Test" can be the new gold standard for hiring.

Have you heard of “tokenmaxxing”? It is a weird new trend at companies like Meta, Salesforce, and Microsoft. In 30 days alone, Meta employees burned through 60.2 trillion tokens, which at standard API pricing would cost approximately $900 million.

But I have a counterargument. Not every company can afford that kind of spending, and I don’t think raw token consumption is what a good engineer will be evaluated on.

The landscape of software engineering is shifting. As AI models like Claude, ChatGPT, and Gemini, along with open-source models like Kimi, GLM, and Qwen, become commoditized, the act of writing code is no longer the primary differentiator of a “good” engineer. When everyone has access to the same world-class coding intelligence, the focus shifts from the how to the what, the why, and, most importantly, the cost.

We are entering an era of “Economic Engineering,” where the elite developers will be those who can navigate a world of token budgets, proprietary agent skills, and complex architectural judgment. But here’s the catch: while large companies can afford to waste tokens on leaderboard games, most organizations cannot. The real competitive edge belongs to engineers who understand token efficiency as a first-class concern—not as a vanity metric, but as a core measure of engineering excellence.

In the future, hiring will be driven by efficiency rather than just logic. Companies will move toward a model where engineers are evaluated on their ability to optimize the resources required to build a product or a feature.

1. The Rise of Token Economics: Why "Tokenmaxxing" is the Wrong Metric

The future of hiring is straightforward:

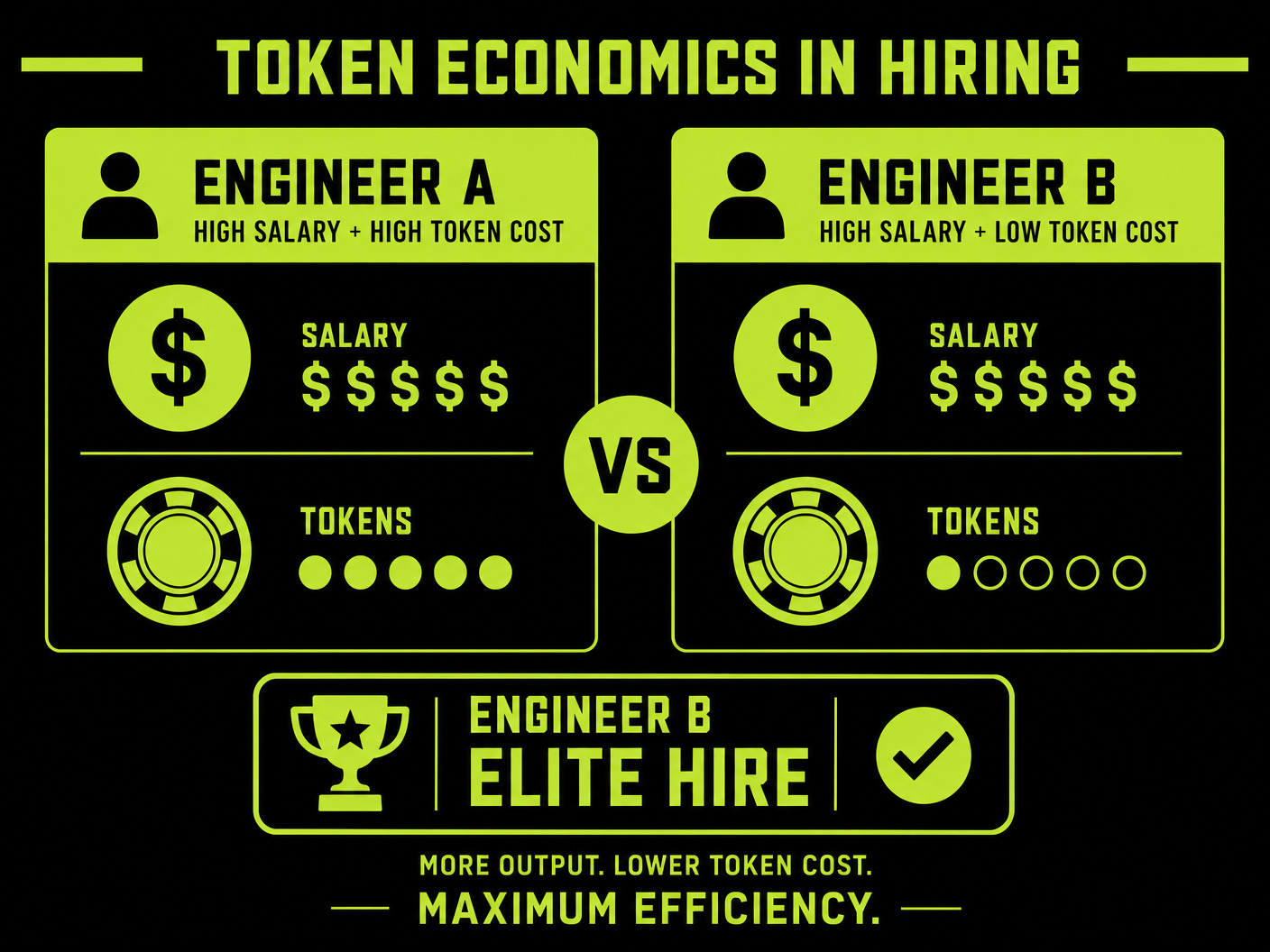

Give engineers a real task and ask them to build the same feature in the most cost-efficient and token-efficient way. Whoever meets the same requirements using the fewest tokens at the lowest cost is the one you hire. This is where their talents and abilities truly surface.

The total cost of an engineer will no longer be limited to their salary. Instead, it will encompass their “token footprint.” A developer who builds a feature at a fraction of the token cost of another is inherently more valuable in a world where running AI infrastructure is a major operational expense.

Your overall cost of hiring isn’t just the CTC (cost to company); it also depends on how efficiently the engineer uses tokens, because the same feature built by someone else might cost significantly more or less to generate.
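To make the comparison concrete, here is a minimal sketch of how a Token Test could be scored. Everything in it is an assumption for illustration: the `Submission` fields, the candidate labels, the token counts, and especially the per-million-token prices, which are placeholders and not any provider's real rates. The idea is only that a submission must first meet the requirements, and passing submissions are then ranked by token cost.

```python
from dataclasses import dataclass

# Hypothetical prices in USD per million tokens; real prices vary by model and provider.
PRICE_PER_MILLION = {"input": 3.00, "output": 15.00}


@dataclass
class Submission:
    candidate: str
    input_tokens: int
    output_tokens: int
    passed_all_requirements: bool


def token_cost(sub: Submission) -> float:
    """Dollar cost of one submission under the assumed price table."""
    total = (
        sub.input_tokens * PRICE_PER_MILLION["input"]
        + sub.output_tokens * PRICE_PER_MILLION["output"]
    )
    return total / 1_000_000


def rank_submissions(subs: list[Submission]) -> list[Submission]:
    """Drop submissions that miss the requirements, then sort cheapest first."""
    passing = [s for s in subs if s.passed_all_requirements]
    return sorted(passing, key=token_cost)


# Made-up example data: three candidates building the same feature.
submissions = [
    Submission("A", input_tokens=2_000_000, output_tokens=400_000, passed_all_requirements=True),
    Submission("B", input_tokens=600_000, output_tokens=150_000, passed_all_requirements=True),
    Submission("C", input_tokens=100_000, output_tokens=20_000, passed_all_requirements=False),
]

ranking = rank_submissions(submissions)
for s in ranking:
    print(f"{s.candidate}: ${token_cost(s):.2f}")
```

Note that candidate C uses the fewest tokens but is excluded because the build does not meet the requirements. Efficiency only counts once the result is the same.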

This shift is already happening in real companies, but in the wrong way.

At Meta, an engineer created an internal “token leaderboard” that ranks employees by token usage, awarding titles like “Token Legend” and “Session Immortal” to top users.

Tokenmaxxing is a recent trend in the tech industry, particularly within Big Tech companies like Meta, Microsoft, and Salesforce, where employees compete to consume as many AI tokens as possible.

It is the AI-era equivalent of "lines of code": a productivity metric that is easily gamed and often leads to significant waste. Just as developers once wrote bloated code to appear "productive," employees now burn tokens to climb these leaderboards.

The problem? It’s created perverse incentives.

Engineers are burning tokens on throwaway projects, running wasteful agents, and asking AI to answer questions that documentation already covers—all to climb the leaderboard.

In 30 days alone, Meta employees burned through 60.2 trillion tokens, which at standard API pricing would cost approximately $900 million. Microsoft and Salesforce have similar leaderboards, with some engineers admitting they’re “tokenmaxxing” not because it produces better work, but because they fear being seen as insufficiently “AI-native.” Gergely Orosz documented this in detail in The Pulse: Tokenmaxxing as a Weird New Trend — it’s worth reading as a cautionary tale of what happens when companies measure the wrong thing.
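As a rough sanity check on those two figures, the arithmetic below derives the blended price they imply. The only inputs are the numbers quoted above; the "blended" rate is an inference from them (total cost divided by total tokens), not a published price for any specific model.

```python
TOKENS_BURNED = 60.2e12      # 60.2 trillion tokens in 30 days (figure quoted above)
ESTIMATED_COST_USD = 900e6   # approximately $900 million (figure quoted above)
DAYS = 30

# Implied average price across all input and output tokens.
implied_price_per_million = ESTIMATED_COST_USD / TOKENS_BURNED * 1_000_000
tokens_per_day = TOKENS_BURNED / DAYS
cost_per_day = ESTIMATED_COST_USD / DAYS

print(f"Implied blended price: ${implied_price_per_million:.2f} per million tokens")
print(f"Average burn: {tokens_per_day / 1e12:.2f} trillion tokens per day")
print(f"Average spend: ${cost_per_day / 1e6:.0f} million per day")
```

At these figures the implied rate works out to roughly $14.95 per million tokens, or about $30 million per day.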

This is the warning. The Token Test is the antidote.

The Token Test is about delivering the exact same results with the lowest token usage. It’s about measuring efficiency, not activity. This distinction matters because while large enterprises can absorb the waste of tokenmaxxing, most companies cannot. For startups, mid-market firms, and even many Fortune 500 divisions, token efficiency is the difference between sustainable AI infrastructure and unsustainable costs.

This aligns with the shift I discussed in The Judgment Premium, where the value moves from intelligence (writing code) to judgment (optimizing outcomes). But now there’s a quantifiable metric: tokens spent per unit of business value delivered.

2. Beyond Syntax: Hiring for Architectural Judgment in the AI Era

For decades, technical interviews focused on whether a candidate knew the nuances of Java loops or TypeScript types. In the post-coding era, these are commodities. The new core competency is high-level judgment.

Hiring will never be the same again. You’re not just evaluating the technical skill of writing code; you’re judging architectural decisions, system design, and data modeling. That’s the core territory of the builder, not whether you know loops in Java or C++.

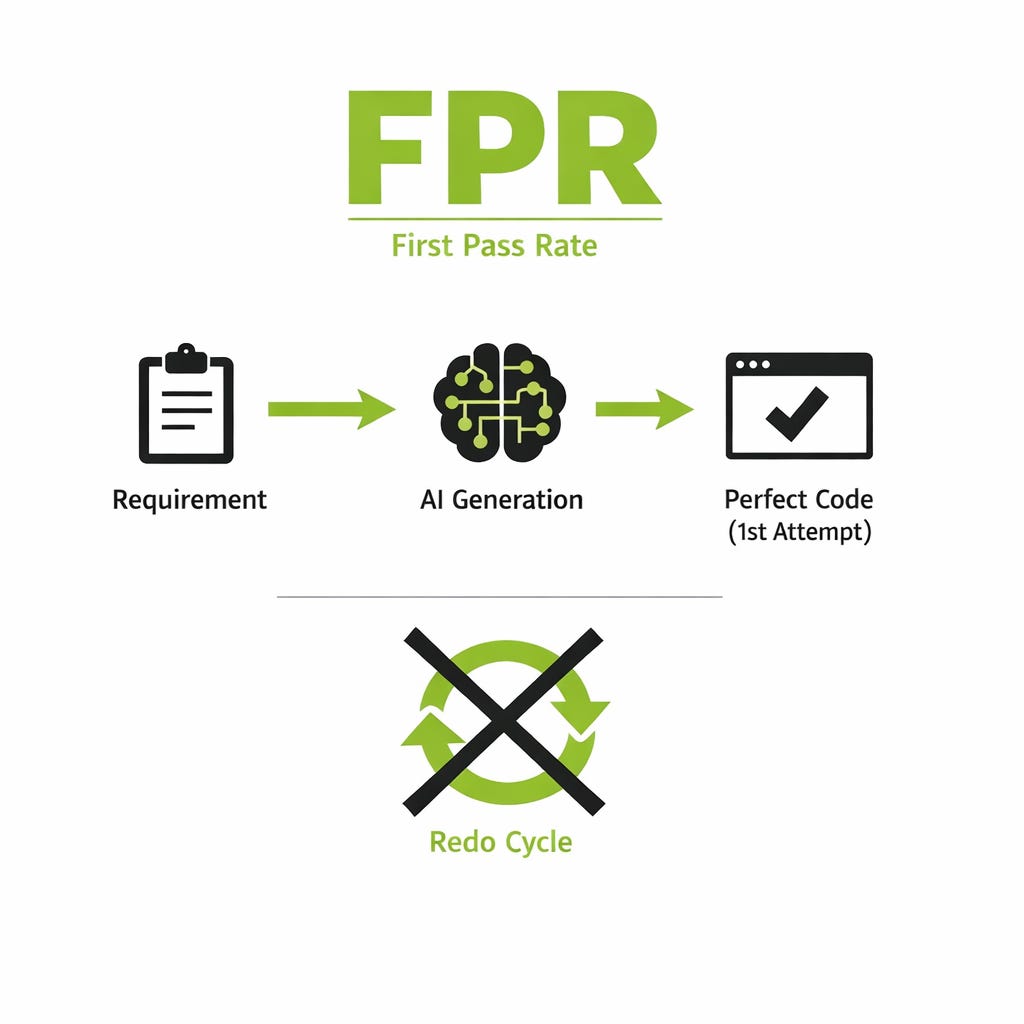

This shift prioritizes what we call the “First Pass Rate” (FPR).

We can build a system that evaluates people on their first pass rate: whether they get code that matches the requirements and handles every edge case in the first generation, without any redoing.

Success will be measured by the ability to visualize a system so clearly that the AI can execute it perfectly on the first attempt, eliminating the expensive “redo” cycles that plague modern development.
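One simple way to put a number on this is sketched below, under the assumption that FPR is the share of tasks accepted on the first generation. The function name, the input format (attempts needed per task), and the sample data are all hypothetical.

```python
def first_pass_rate(attempts_per_task: list[int]) -> float:
    """Fraction of tasks accepted on the first generation.

    attempts_per_task[i] is how many generations task i needed before the
    output met the requirements (1 means no redo was needed).
    """
    if not attempts_per_task:
        raise ValueError("need at least one task")
    first_pass = sum(1 for n in attempts_per_task if n == 1)
    return first_pass / len(attempts_per_task)


# Made-up example: five tasks, two of which needed redo cycles.
attempts = [1, 1, 3, 1, 2]
fpr = first_pass_rate(attempts)
redo_generations = sum(n - 1 for n in attempts)

print(f"First Pass Rate: {fpr:.0%}")            # First Pass Rate: 60%
print(f"Redo generations: {redo_generations}")  # Redo generations: 3
```

Tracking the redo count alongside FPR shows where the extra token spend comes from, since every redo is another paid generation.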

But here’s what makes this possible: context engineering. As I’ve explored in What is Context Engineering? (And Why It’s Better Than Prompt Engineering), the industry is shifting toward the discipline of building the entire information environment that an AI system needs to succeed. When an AI system fails in production, it’s rarely because the prompt needed better wording. It’s because the model didn’t see the right information, saw too much irrelevant information, or couldn’t carry the right state forward.

This is where the hiring criteria change. Companies will now evaluate engineers on nine core skills:

These nine skills are the practical implementation of context engineering. They’re what separates engineers who waste tokens from engineers who optimize them. And crucially, they’re what separates engineers who can work with AI systems from engineers who can architect them.

3. BYOM: Why the Future of Engineering is "Bring Your Own Model"

We will be moving from “Bring Your Own Device” to “Bring Your Own Model” (BYOM).

Engineers will no longer arrive at a company with just a laptop; they will arrive with a proprietary “digital brain” of fine-tuned skills.

Every engineer will bring their own coding models, either filtered versions of big models or smaller models they’ve built themselves, to run on company premises. This isn’t just about the model itself, but about the accumulated “agent skills” a developer has fine-tuned over months or years of their career. These are proprietary skills they can carry into any company they work with.

This is the next evolution of the Anthropic Skill Creator workflow—where your value is the library of specialized skills you’ve built and benchmarked. But more importantly, it’s where model orchestration becomes a competitive advantage.

An engineer who knows how to combine Claude for complex reasoning, GPT for speed, and open-source models for cost efficiency will always outperform an engineer who relies on a single model. They’ll deliver better results at a fraction of the token cost.
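In its simplest form, that kind of orchestration is a routing decision. The sketch below assumes a hypothetical three-way split of task types that mirrors the sentence above; the task categories and the model labels are placeholders for illustration, not real API model identifiers, and a real router would also weigh price, latency, and context size.

```python
from enum import Enum


class TaskKind(Enum):
    COMPLEX_REASONING = "complex_reasoning"   # e.g. architecture or tricky debugging
    QUICK_EDIT = "quick_edit"                 # e.g. small, latency-sensitive changes
    BULK_BOILERPLATE = "bulk_boilerplate"     # e.g. high-volume, low-risk generation


# Hypothetical routing table; the labels are not real model IDs.
ROUTES = {
    TaskKind.COMPLEX_REASONING: "claude",
    TaskKind.QUICK_EDIT: "gpt",
    TaskKind.BULK_BOILERPLATE: "open-source",
}


def pick_model(kind: TaskKind) -> str:
    """Return the model label assigned to this kind of task."""
    return ROUTES[kind]


plan = [TaskKind.COMPLEX_REASONING, TaskKind.BULK_BOILERPLATE, TaskKind.QUICK_EDIT]
assignments = [pick_model(kind) for kind in plan]
print(assignments)  # ['claude', 'open-source', 'gpt']
```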

4. The New Engineering Skills: Reading AI Code and Context Management

As AI generates mountains of documentation and code, the human role becomes one of the “Ultimate Editor.” This requires two overlooked skills: high-stamina reading and context switching.

Reading will become an increasingly critical skill. AI generates mountains of English. You need the patience to read it through, visualize it, and understand it before handing it over to the coding agent. You cannot always rely on AI to read for you.

Furthermore, the complexity of managing a fleet of autonomous agents requires a unique cognitive ability to maintain state across multiple workstreams. Context switching is the toughest part for a human. Imagine working with multiple agents—you need to keep track of which agent is doing what, and whether the context is being managed properly. This is harder than it sounds.

Managing this complexity is why reading skill and context management are now hiring criteria. An engineer who can read through AI-generated documentation, spot the signal in the noise, and maintain coherent state across multiple agents is worth significantly more than an engineer who can’t.

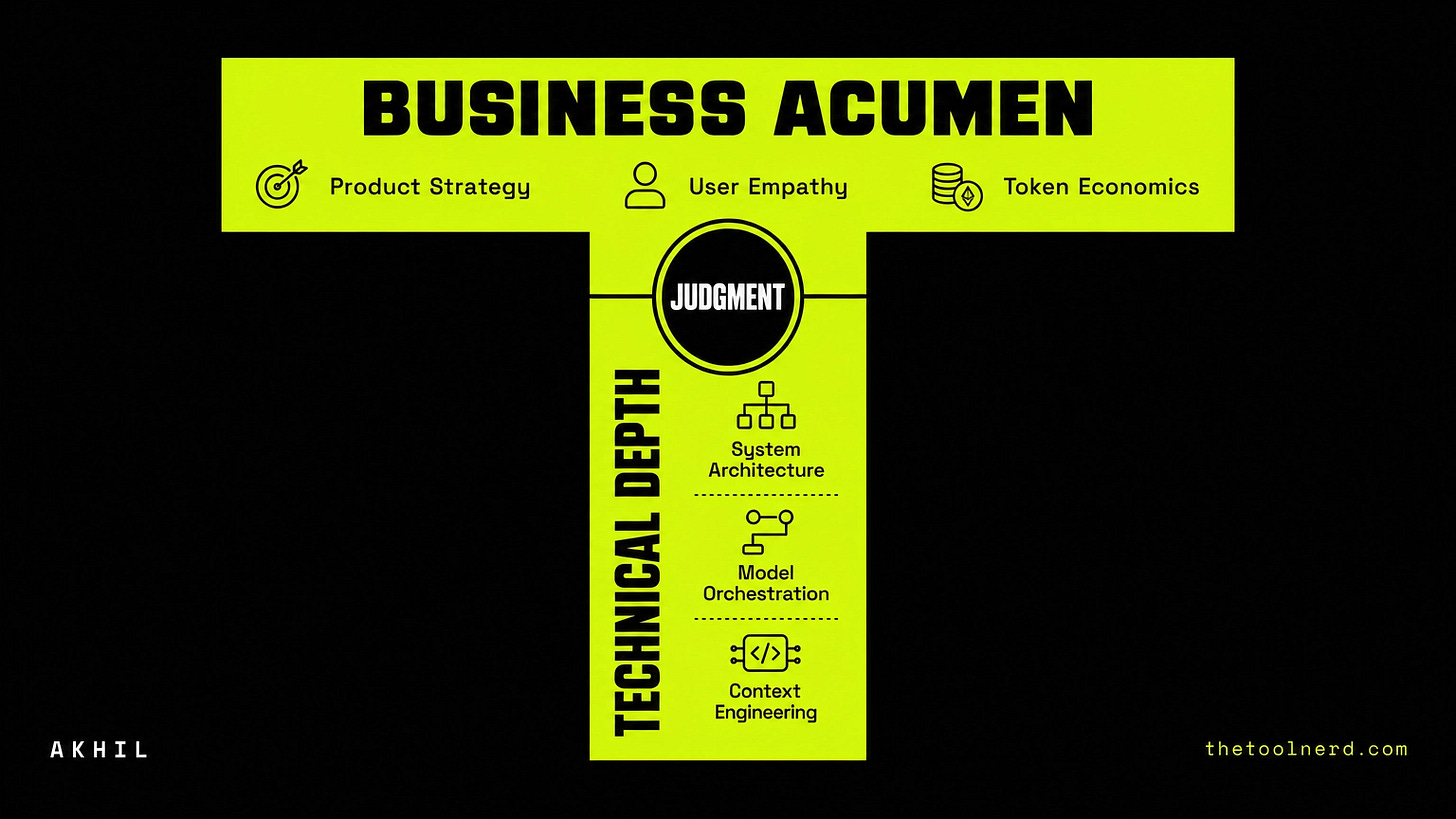

5. The T-Shaped Business Engineer: Bridging AI Code and Business Metrics

The most successful developers will be those who refuse to stay in a technical silo. To provide an LLM with the right context, an engineer must understand the business objective of the code they are requesting.

You need to have a taste for everything. You should be able to tell what a good design is; you don’t need to be the expert, but you should understand it. Most developers don’t understand the business; they just write code. If you want to stay relevant, you need to understand which metric the feature you are building is going to move.

By understanding the product metrics—the “why” behind the feature—the engineer can architect solutions that don’t just work, but actually drive company growth and user satisfaction. This is the T-shaped engineer: deep technical capability combined with broad business acumen. They understand that token efficiency isn’t just about cost—it’s about delivering business value faster and cheaper.

Conclusion

The era of the “coder” is ending; the era of the “AI Architect” has begun. In this new world, your value isn’t found in the lines of code you write, but in the precision of your judgment, the efficiency of your tokens, and your ability to steer complex systems toward business reality.

While companies like Meta are building leaderboards that reward token waste, the best organizations are building hiring processes that reward token efficiency. They’re measuring context engineering skill, not raw consumption. They’re looking for engineers who understand that in an era where intelligence is commoditized, the real edge is efficiency—the ability to deliver more value with fewer resources.

The Token Test is how they’ll find them.

References & Related Reading

This article builds on several key industry trends and insights:

Tokenmaxxing Trend: Gergely Orosz, The Pulse: Tokenmaxxing as a Weird New Trend (Pragmatic Engineer, April 2026) — documents how Meta, Microsoft, and Salesforce are creating token leaderboards and the perverse incentives they create. The cautionary data point: Meta burned 60.2 trillion tokens in 30 days, worth ~$900M at API prices.

Context Engineering Shift: What is Context Engineering? (And Why It’s Better Than Prompt Engineering) — My deep dive into why the industry is moving from prompt engineering to context engineering, and how to build the entire information environment for AI systems. The nine skills in this article are the practical implementation of that framework.

The Judgment Premium: My previous article on how the next $1T company will be built on judgment, not just intelligence, which directly connects to why token efficiency matters more than raw capability.

Anthropic Skill Creator: The evolution of how developers build and carry proprietary skills across companies, which ties directly to the BYOM (Bring Your Own Model) era. https://www.thetoolnerd.com/p/anthropic-skill-creator-20-update

The Token Test isn’t just a hiring framework. It’s a recognition that in a world where AI capabilities are increasingly commoditized, the real differentiator is how efficiently you use them. That’s the future of engineering.