If You’re Using AI to Screen Resumes, You’re Optimizing for the Wrong People

How automated resume screening is filtering out your best candidates. AI resume screening tools optimize for presentation, not capability. Discover the hidden costs of AI hiring bias and how to fix it.

If you’re using AI to screen resumes, you’re likely filtering out the exact people you need.

Can you actually evaluate talent through a resume?

Most hiring leaders would say “NO”. Yet we’ve built an entire industry around it. Applicant Tracking Systems, resume screening tools, keyword-matching algorithms: all designed to make resume evaluation faster, smarter, and more objective.

But here’s what’s actually happening:

We’re not evaluating talent anymore. We’re evaluating presentation.

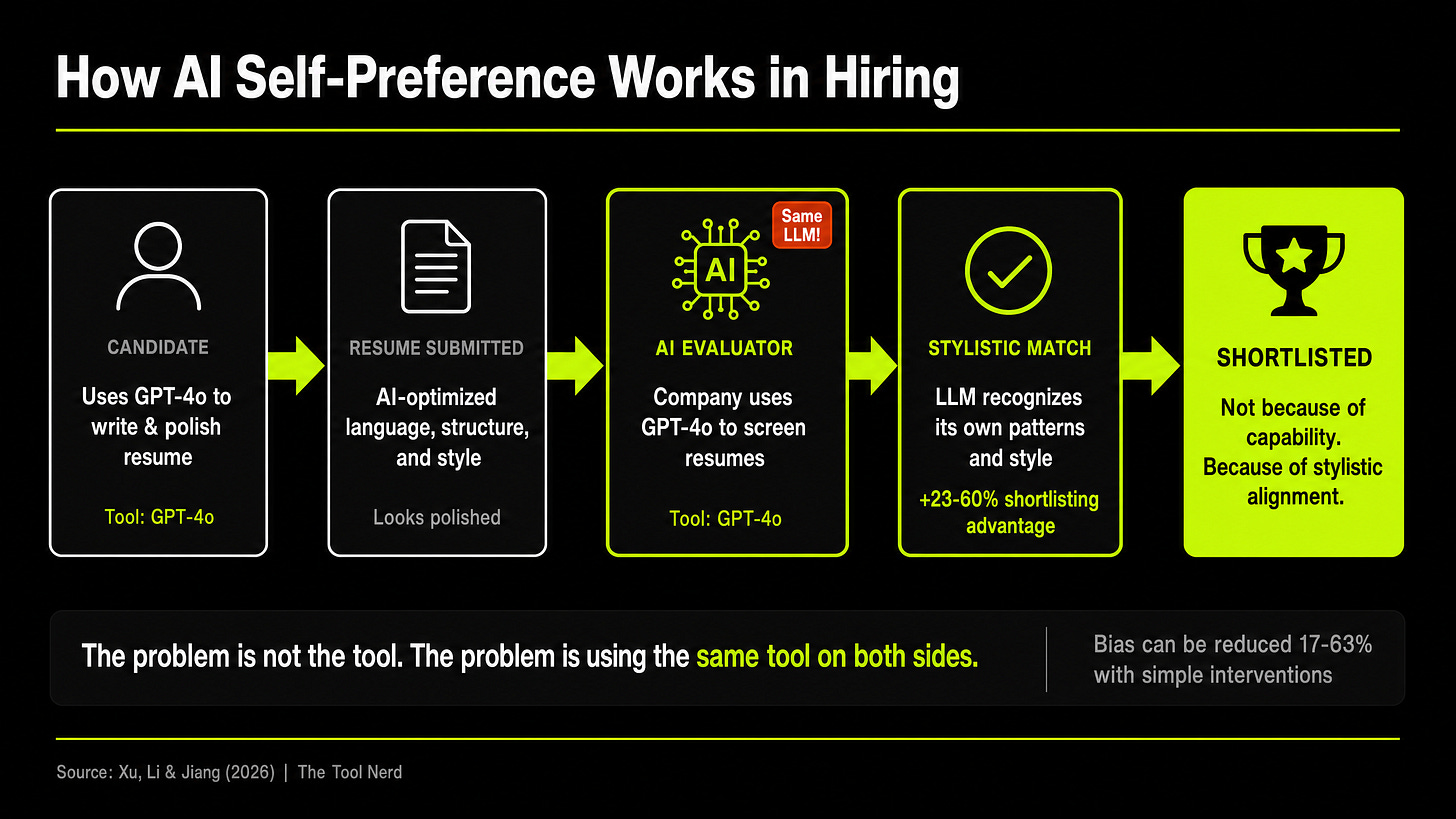

And now that AI has entered the picture, we’re not even evaluating presentation well. We’re evaluating how well a resume matches the stylistic patterns of the AI tool doing the evaluation.

Recent research backs this up:

When AI evaluators shortlist candidates, they favor their own writing style by up to 82%.

Vasumathi (Associate Director HR at Altir) and I have been discussing a shift in the hiring landscape: “presentation” has replaced “judgment,” and AI-based hiring systems may be stopping you from hiring the right candidates.

I co-authored this article with her.

Now, to understand why this is happening, we have to look at how we got here.

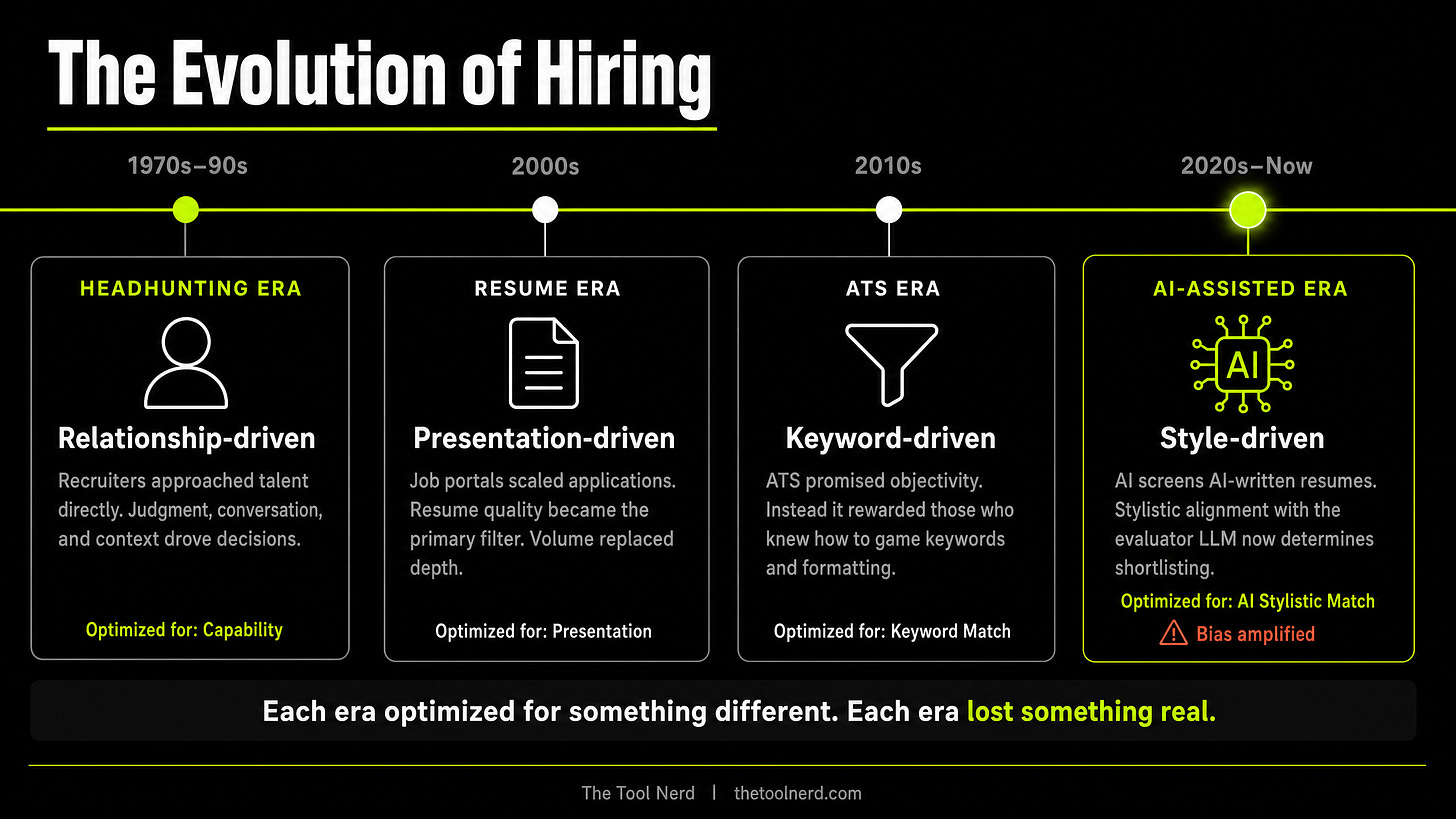

How Hiring Broke: The Shift to Automated ATS and AI Evaluators

Hiring didn’t suddenly become broken. It degraded gradually, with each new system optimising for something slightly different from the last.

The Headhunting Era

There was once a time when recruitment largely depended on headhunting. Recruiters and talent specialists actively approached skilled professionals. They built relationships. They assessed capabilities through conversations. Hiring decisions relied heavily on human judgment, industry understanding, and the ability to identify potential beyond a document.

The problem? It didn’t scale.

The Resume-Driven Era

With the rise of job portals, the hiring process became more resume-driven. Candidates invested significant time building their resumes. The quality of resume content became one of the primary filters for recruiters.

A single job posting started attracting hundreds, sometimes thousands, of resumes. Recruitment gradually became a race against time, attention span, and luck. Often, the resumes reviewed first had a greater chance of selection, and candidates who were better at presenting content had a stronger advantage regardless of actual capability.

The ATS Era

Then came Applicant Tracking Systems (ATS). They promised to solve the volume problem. But ATS had a limitation: it could only parse what was on the page. Candidates learned to optimise with keyword stuffing and formatting tricks. Presentation became even more important than substance.

The AI-Assisted Era

Today, hiring has entered another phase. Most ATS platforms now integrate AI-powered screening tools that promise to reduce recruiter effort by 50% or more.

However, this raises an important question:

“Is AI truly solving the hiring problem, or simply accelerating an already flawed filtering process?”

We’ve actually seen this movie before. In 2018, Reuters reported that Amazon had scrapped a secret AI recruiting tool because it penalized resumes containing the word "women's." The model had taught itself to favor male candidates after being trained on 10 years of resumes submitted to the company, most of which came from men.

We assumed AI has gotten smarter since then. But today, candidates are no longer writing resumes independently. AI tools now generate highly polished, optimised, role-specific resumes within minutes. Even job portals offer AI-assisted resume writing as a premium feature.

As a result, almost every candidate today appears “well-written” on paper, even when practical expertise may be limited.

This brings us to the uncomfortable research that confirms what many HR leaders already suspected.

AI Hiring Bias Research: Why LLMs Prefer Their Own Resumes

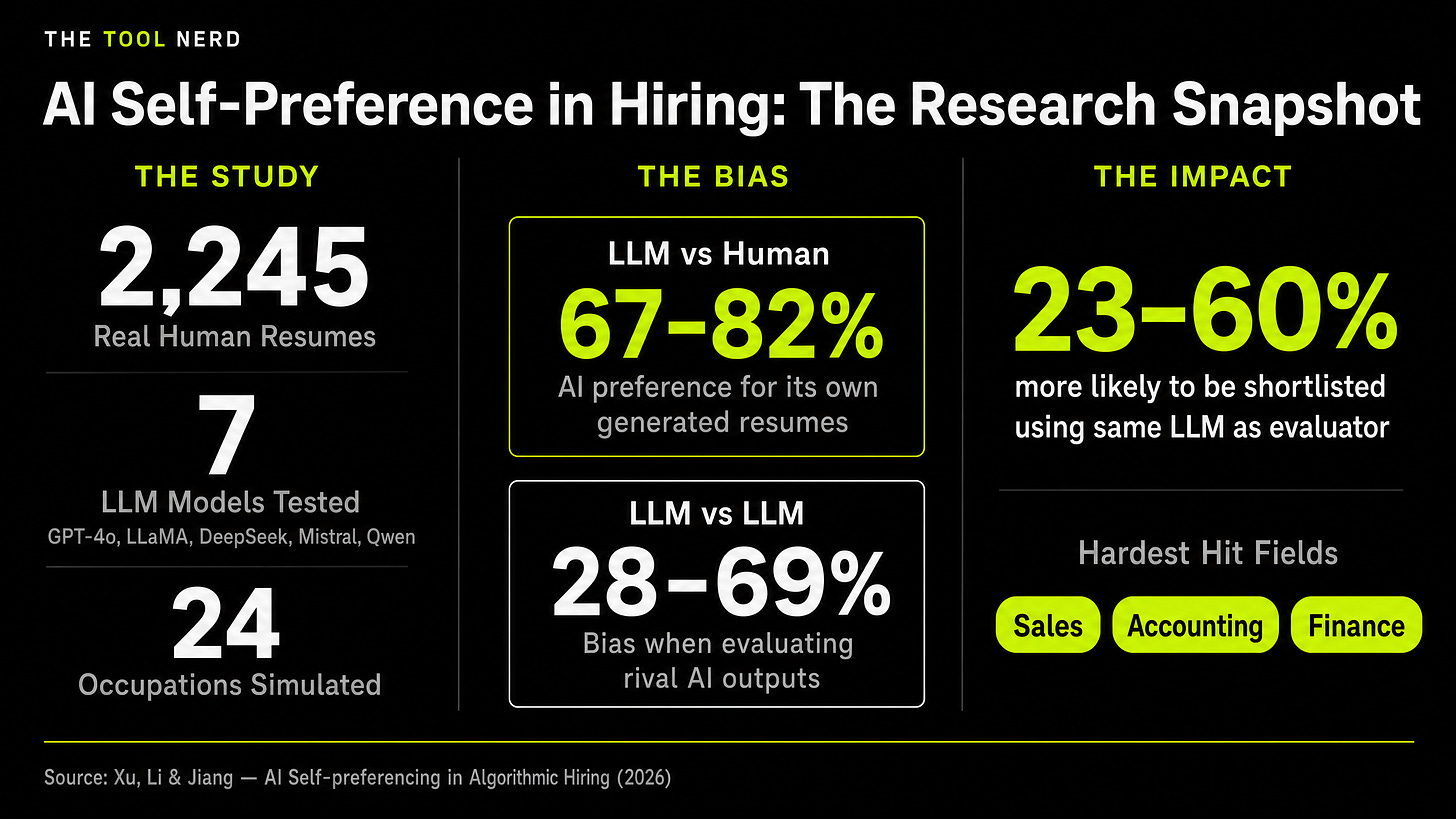

Researchers at the University of Maryland, National University of Singapore, and Ohio State University conducted a large-scale resume correspondence experiment using 2,245 real resumes sourced from a professional resume-building platform and collected before widespread AI adoption.

For each resume, they generated multiple counterparts using seven different LLMs: GPT-4o, GPT-4o-mini, GPT-4-turbo, LLaMA 3.3-70B, Mistral-7B, Qwen 2.5-72B, and DeepSeek-V3.

Then they tested whether these LLMs, when acting as evaluators, systematically favoured their own generated content.

The answer: Yes. Dramatically.

LLMs showed a strong and consistent preference for resumes they had generated themselves over human-written equivalents.

Larger models like GPT-4o, GPT-4-turbo, DeepSeek-V3, Qwen 2.5-72B, and LLaMA 3.3-70B exhibited a particularly strong bias, exceeding 65% even after controlling for content quality.

The larger the model, the stronger the bias.
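To make “self-preference” concrete, here is a minimal Python sketch of how a preference rate could be counted from head-to-head judgments. It is a simplification, not the paper’s method: the `Comparison` record, the `self_preference_rate` function and the toy data are hypothetical, and the study also controlled for content quality, which this sketch does not. A rate of 0.5 would mean no preference either way.

```python
from dataclasses import dataclass


@dataclass
class Comparison:
    """One hypothetical head-to-head judgment made by an evaluator LLM."""
    evaluator: str  # model doing the judging, e.g. "gpt-4o"
    author_a: str   # who wrote resume A: "human" or a model name
    author_b: str   # who wrote resume B
    winner: str     # "a" or "b"


def self_preference_rate(comparisons: list[Comparison], evaluator: str) -> float:
    """Share of evaluator-vs-human pairs where the evaluator picked its own resume."""
    own_wins = 0
    total = 0
    for c in comparisons:
        if c.evaluator != evaluator:
            continue
        # Only count pairs of (evaluator-written resume, human-written resume).
        if {c.author_a, c.author_b} != {evaluator, "human"}:
            continue
        total += 1
        winning_author = c.author_a if c.winner == "a" else c.author_b
        if winning_author == evaluator:
            own_wins += 1
    if total == 0:
        raise ValueError("no evaluator-vs-human comparisons found")
    return own_wins / total


# Toy data (invented for illustration): resume order is varied so that
# position in the prompt is not the same as authorship.
toy = [
    Comparison("gpt-4o", "gpt-4o", "human", "a"),
    Comparison("gpt-4o", "human", "gpt-4o", "b"),
    Comparison("gpt-4o", "gpt-4o", "human", "a"),
    Comparison("gpt-4o", "human", "gpt-4o", "a"),
]
print(self_preference_rate(toy, "gpt-4o"))  # 0.75
```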

They then simulated realistic hiring pipelines across 24 different occupations.

The disadvantage was most severe in business-related fields: sales, accounting, and finance.
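The pipeline effect can be illustrated with a toy, deterministic simulation in Python. Every qualification level gets two resumes of identical quality, one human-written and one in the evaluator’s own style, and the evaluator adds a hypothetical `style_bonus` to the latter before shortlisting the top `k`. The function name, the integer scoring scale and the bonus are assumptions for illustration only; this is not the study’s simulation.

```python
def simulate_shortlist(n_pairs: int, k: int, style_bonus: int) -> dict[str, float]:
    """Toy shortlisting run; returns the shortlist rate for each resume group.

    Each quality level (0..n_pairs-1) contributes one human-written and one
    evaluator-styled resume with the same underlying quality. The hypothetical
    evaluator adds `style_bonus` points to resumes written in its own style.
    """
    candidates: list[tuple[str, int]] = []
    for quality in range(n_pairs):
        candidates.append(("human", quality))
        candidates.append(("llm_styled", quality + style_bonus))

    # Highest score first; Python's sort is stable, so ties keep insertion order.
    ranked = sorted(candidates, key=lambda c: c[1], reverse=True)
    shortlist = ranked[:k]

    rates: dict[str, float] = {}
    for group in ("human", "llm_styled"):
        picked = sum(1 for g, _ in shortlist if g == group)
        rates[group] = picked / n_pairs  # each group has n_pairs candidates
    return rates


print(simulate_shortlist(n_pairs=50, k=20, style_bonus=0))  # {'human': 0.2, 'llm_styled': 0.2}
print(simulate_shortlist(n_pairs=50, k=20, style_bonus=5))  # {'human': 0.14, 'llm_styled': 0.26}
```

With no bonus, both groups are shortlisted at the same rate. A small style bonus is enough to nearly double the evaluator-styled group’s advantage over human-written resumes, even though quality is identical pair for pair.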

Evaluations focus less on a candidate’s actual skills and more on whether their style matches what the evaluator LLM expects.

Using AI to screen resumes is like hiring someone not because they’re qualified, but because you know them. It’s a traditional HR problem, the kind of bias that existed long before AI, except now it’s automated and amplified at scale.

The Real Problem with AI in Recruitment: Presentation vs. Capability

The real issue isn’t just that AI prefers its own outputs. The deeper issue is that we’re evaluating the wrong traits entirely.

"If AI is being used to create resumes and AI is also being used to evaluate them, can organizations truly rely on AI-generated evaluations as indicators of capability?"

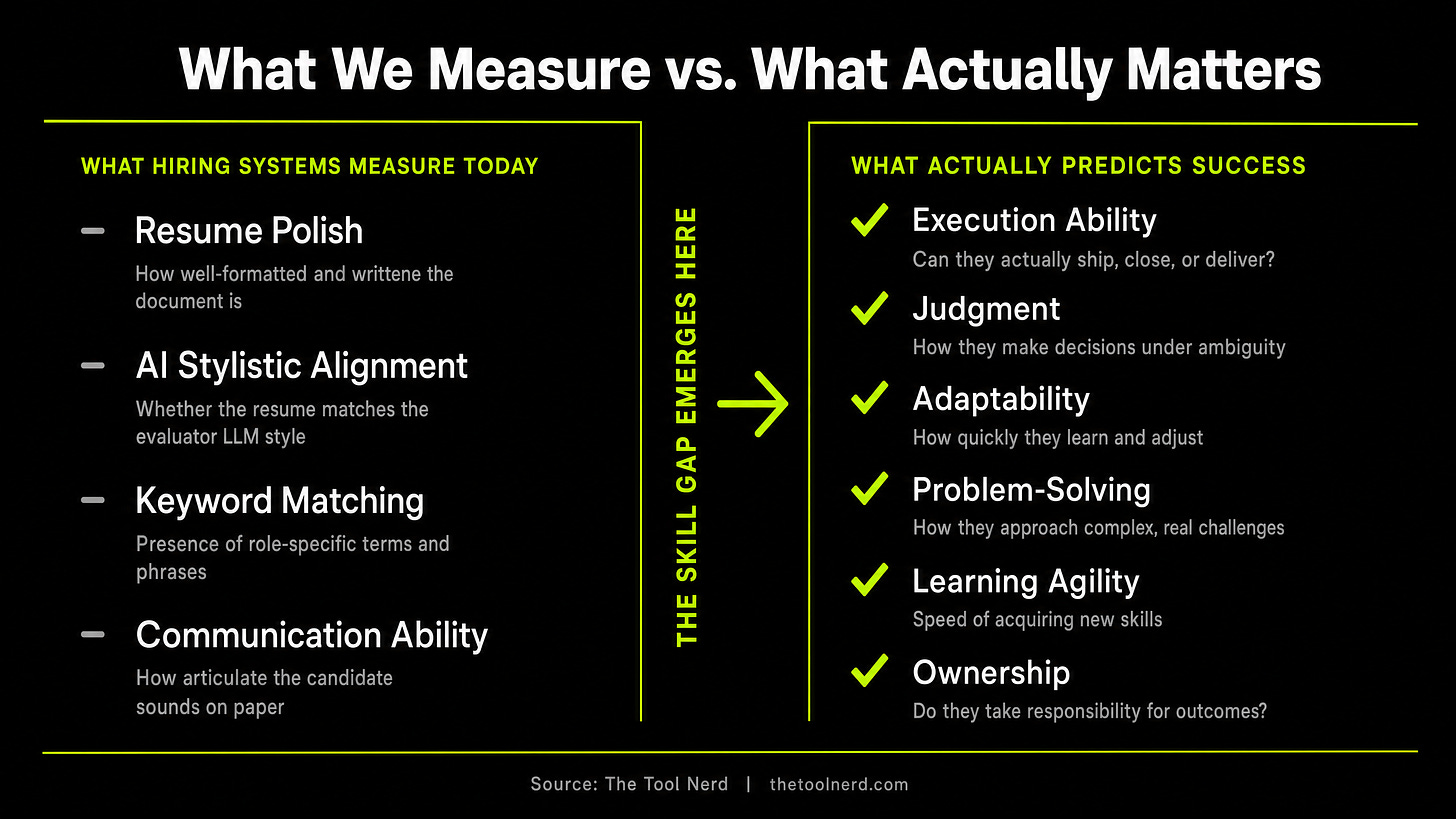

Right now, most hiring systems measure what is easy to parse: resume polish, AI stylistic alignment, keyword density, and communication ability. But what actually predicts whether someone succeeds in a role is execution ability, judgment, adaptability, and ownership.

There is a massive gap between those two lists. Think about the disconnect:

Resume quality doesn’t equal job capability. A beautiful resume doesn’t mean you can ship code, close deals, or manage a team under pressure.

Communication polish doesn’t equal execution ability. Someone can sound brilliant on paper and freeze when they need to make a real decision.

AI-optimized language doesn’t equal authentic expertise. You can use AI to make your resume sound like you have deep expertise in something you’ve never actually done.

We chose easy over accurate. And the consequence is predictable: candidates get shortlisted for presentation rather than capability.

"The candidates who succeed aren't always the ones with the best resumes. They're the ones with judgment, adaptability, and genuine ownership. Those qualities don't show up on a resume. They show up in how someone approaches a problem."

If this pattern continues, dominant AI styles will become entrenched in hiring pipelines. We already know from recent research that automated recruitment tools continue to enable systemic discrimination and demographic bias.

But now, we are layering an entirely new problem on top of it. We are decreasing diversity through stylistic bias. Applicant pools will start to look less like a diverse spectrum of human capability, and more like the generic outputs of whichever LLM is currently the most popular.

These are among the major reasons many organizations today face a growing skill gap despite extensive hiring efforts. Candidates are often shortlisted based on communication ability, resume presentation, and AI-enhanced articulation. However, when actual business outcomes and execution responsibilities arise, organizations frequently discover gaps in technical depth, ownership, adaptability, and real-world capability.

So, how do we fix a system that’s optimising for the wrong things?

Mitigating AI Hiring Bias: 3 Actionable Strategies for Recruiters

We can’t go back to pure human judgment. It doesn’t scale. We can’t rely on resumes alone. They don’t predict capability. A different approach is needed.

1. From Resume-Based to Task-Based Screening

Give candidates meaningful work to complete. Real work that reveals how they think.

Then evaluate how they approach the problem. See how they use tools. See how they navigate ambiguity. See how they ask questions when they don’t understand something.

This is harder to scale than resume screening, but it’s far more predictive. I wrote about a similar concept in my previous article on the Token Test, a different take on hiring engineers. Give candidates real work, and you’ll see who they actually are.

2. From Presentation to Behavioural Evaluation

Stop optimising for resume polish. Start assessing alignment with company vision. Look for learning agility. Evaluate cultural contribution.

“The future of effective hiring may depend less on automated screening and more on strengthening recruiter judgment, hiring manager calibration, domain-based screening, and behavioral evaluation.”

Assess problem-solving orientation. Look for long-term capability, not short-term credentials. Evaluate authenticity and integrity. These don’t show up on a resume. They show up in how someone approaches a problem.

3. Mitigating AI Bias When You Do Use AI

If you’re going to use AI for screening, at least do it intelligently.

The research identified two simple interventions that reduce the bias.

System prompting. Explicitly instruct the model to ignore the origin of the resume and focus only on substantive content. Tell it not to favour resumes that match its own style.
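A system prompt along these lines could look like the following Python sketch. The prompt wording, the constant `NEUTRAL_SYSTEM_PROMPT` and the helper `build_screening_messages` are hypothetical and are not the prompt used in the study. The messages use the role/content structure that most chat-completion APIs accept; actually sending them to a model is left out.

```python
# Hypothetical neutrality instruction; not the wording used in the study.
NEUTRAL_SYSTEM_PROMPT = (
    "You are screening resumes for the role described by the user. "
    "Judge only the substantive content: skills, experience, and evidence of results. "
    "Ignore who or what may have written each resume, including whether it was "
    "written by a human or generated by an AI model. "
    "Do not favour resumes whose wording or style resembles your own writing."
)


def build_screening_messages(
    job_description: str, resume_a: str, resume_b: str
) -> list[dict[str, str]]:
    """Build a chat-style message list for one pairwise screening decision."""
    user_prompt = (
        f"Job description:\n{job_description}\n\n"
        f"Resume A:\n{resume_a}\n\n"
        f"Resume B:\n{resume_b}\n\n"
        "Which resume better fits the role? Answer 'A' or 'B', "
        "then give one sentence of reasoning based on content only."
    )
    return [
        {"role": "system", "content": NEUTRAL_SYSTEM_PROMPT},
        {"role": "user", "content": user_prompt},
    ]


messages = build_screening_messages(
    "Senior accountant, IFRS reporting",
    "Resume text for candidate one...",
    "Resume text for candidate two...",
)
print(messages[0]["role"])  # system
```

Keeping the neutrality rule in the system message means every comparison is screened under the same instruction, independent of the resumes being compared.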

Ensemble voting. Don't rely on one giant model. Combine your primary evaluator (like GPT-4o) with smaller models that exhibit weaker self-recognition to dilute the bias.
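Ensemble voting can be sketched as a simple majority vote over several evaluators’ picks. This is a minimal Python illustration under stated assumptions: each evaluator has already returned “A” or “B” for the same pair of resumes, every vote counts equally, and the model names are placeholders. The study’s exact aggregation rule may differ.

```python
from collections import Counter


def ensemble_pick(votes: dict[str, str]) -> str:
    """Return the majority pick ('A' or 'B') across evaluator models.

    `votes` maps a model name to the resume that model preferred.
    Raises ValueError on an empty ballot or a tie.
    """
    if not votes:
        raise ValueError("need at least one evaluator vote")
    ranked = Counter(votes.values()).most_common()
    winner, winner_count = ranked[0]
    if len(ranked) > 1 and ranked[1][1] == winner_count:
        raise ValueError("tie: use an odd number of evaluators or add a tie-break rule")
    return winner


# Hypothetical ballot: the large primary model prefers A (for example, the resume
# written in its own style), two smaller evaluators prefer B, so the ensemble picks B.
votes = {"primary-large-model": "A", "small-model-1": "B", "small-model-2": "B"}
print(ensemble_pick(votes))  # B
```

Using an odd number of evaluators avoids ties on a two-way choice.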

These interventions reduce LLM-versus-human self-preference by 17% to 63% in relative terms. Not perfect. But substantial.
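“Relative terms” means the reduction is measured against the starting level of bias, not in percentage points. Writing $B_{\text{before}}$ and $B_{\text{after}}$ for a model’s self-preference before and after an intervention (our notation; the numbers are illustrative only, not from the study):

```latex
\text{relative reduction} = \frac{B_{\text{before}} - B_{\text{after}}}{B_{\text{before}}},
\qquad \text{e.g.}\quad \frac{0.60 - 0.30}{0.60} = 0.50 = 50\%
```

In this example, a 50% relative reduction corresponds to an absolute drop of 30 percentage points.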

Closing

Hiring is one of the most consequential decisions an organization makes. Yet we’ve optimized it for efficiency at the expense of accuracy.

As hiring becomes more AI-assisted, human judgment becomes more critical, not less.

AI can improve efficiency, but it cannot fully replace the human ability to identify potential, authenticity, integrity, and contextual fit. The challenge ahead for organizations is not merely managing AI bias; it is ensuring that hiring systems do not lose the ability to recognize real talent beneath AI-generated perfection.

Use AI for what it’s good at. Use humans for what they’re good at. And maybe, just maybe, you’ll actually hire the right people.

Co-authored with Vasumathi Adwant, Associate Director HR at Altir.

Explore AI tools for hiring and team building at tools.thetoolnerd.com

Related reading: The Token Test: How the Best Companies Will Hire Engineers from Now On

Source: Xu, J., Li, G., & Jiang, J.Y. (2026). AI Self-preferencing in Algorithmic Hiring: Empirical Evidence and Insights. arXiv:2509.00462v3.